venn_ng

Member level 5

Hi,

I fabricated an IC that has a quadrature VCO, and I am measuring its phase noise. In simulations, when I change the varactor voltage to tune the frequency, the phase noise decreases (i.e. phase noise performance improves) as I reduce the oscillation frequency. Why does this generally happen?

When I measure the phase noise performance of the IC, I observe two things.

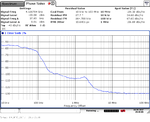

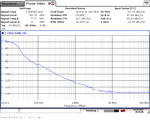

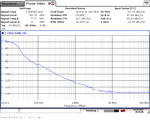

1) The phase noise is much worse than what I simulated. I expected around -126 dBc/Hz at a 1 MHz offset for a 5 GHz oscillation frequency, and this number should decrease further as I lower the oscillation frequency to, say, 4 GHz by tuning the varactor voltage. Instead, I measure around -113 dBc/Hz at a 1 MHz offset for the 5 GHz signal and around -107 dBc/Hz at a 1 MHz offset for the 4 GHz signal. I have attached the images. What could be causing the phase noise to get worse as the oscillation frequency is reduced?
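To put the numbers above side by side, here is a rough sanity check in Python. It assumes the common Leeson-style rule of thumb that, at a fixed offset and with constant tank Q and signal power, phase noise scales as 20·log10 of the carrier frequency. That scaling is an assumption for illustration only; a real varactor-tuned tank will generally not keep Q constant over the tuning range. The function and variable names are made up for this sketch.

```python
import math

def carrier_scaling_db(f_from_hz: float, f_to_hz: float) -> float:
    """Phase-noise change in dB at a fixed offset when the carrier moves
    from f_from_hz to f_to_hz, ASSUMING constant Q and signal power
    (Leeson-style 20*log10 scaling; an idealization)."""
    return 20.0 * math.log10(f_to_hz / f_from_hz)

# Values quoted in the post, all at a 1 MHz offset (dBc/Hz).
sim_5g = -126.0    # simulated, 5 GHz carrier
meas_5g = -113.0   # measured, 5 GHz carrier
meas_4g = -107.0   # measured, 4 GHz carrier

# Gap between measurement and simulation at 5 GHz.
gap_5g = meas_5g - sim_5g

# Change expected from carrier scaling alone when tuning 5 GHz -> 4 GHz.
expected_shift = carrier_scaling_db(5e9, 4e9)

# Change actually measured when tuning 5 GHz -> 4 GHz.
measured_shift = meas_4g - meas_5g

print(f"Measured vs simulated at 5 GHz: {gap_5g:+.1f} dB")
print(f"Expected shift 5 GHz -> 4 GHz (ideal scaling): {expected_shift:+.2f} dB")
print(f"Measured shift 5 GHz -> 4 GHz: {measured_shift:+.1f} dB")
print(f"Unexplained difference: {measured_shift - expected_shift:+.2f} dB")
```

Under that idealized scaling, tuning down to 4 GHz would account for only about a 2 dB improvement, so the measured 6 dB degradation goes in the opposite direction and is on top of the 13 dB gap to simulation at 5 GHz.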
