eigen

Newbie level 3

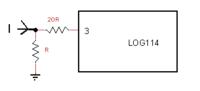

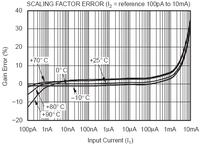

I need to measure a time-dependent current signal between 1 µA and 20 mA. I used TI's LOG114 logarithmic current amplifier. It worked okay at low current levels, but once the current exceeded 1 mA there were significant errors and nonlinearity, and the error-correction method suggested in the LOG114's manual was not good enough. So I am thinking of attenuating the current by at least a factor of 20 to keep the LOG114 in its comfort zone. Can anyone suggest a way of doing this, or an alternative solution to this problem?
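To make the numbers concrete, here is a rough sketch of the range arithmetic, assuming an ideal divide-by-20 attenuator with no offset or leakage (the helper names `attenuated_range` and `decades` are just illustrative, not from any library):

```python
import math

I_MIN = 1e-6        # lowest expected current: 1 uA (from the post)
I_MAX = 20e-3       # highest expected current: 20 mA (from the post)
ATTENUATION = 20.0  # assumed ideal attenuation factor (the minimum I have in mind)


def attenuated_range(i_min, i_max, factor):
    """Return the (min, max) current seen by the log amp after ideal attenuation."""
    return i_min / factor, i_max / factor


def decades(i_min, i_max):
    """Return how many decades of dynamic range the span covers."""
    return math.log10(i_max / i_min)


lo, hi = attenuated_range(I_MIN, I_MAX, ATTENUATION)
print(f"Attenuated range: {lo * 1e9:.0f} nA to {hi * 1e3:.0f} mA")
print(f"Dynamic range: {decades(lo, hi):.2f} decades")
```

With a factor of exactly 20, the top of the range lands right at 1 mA, which is where the errors started, so a somewhat larger factor may be needed in practice. The attenuation does not change the dynamic range: the log amp still has to cover about 4.3 decades, just shifted down to lower currents.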