Paul98

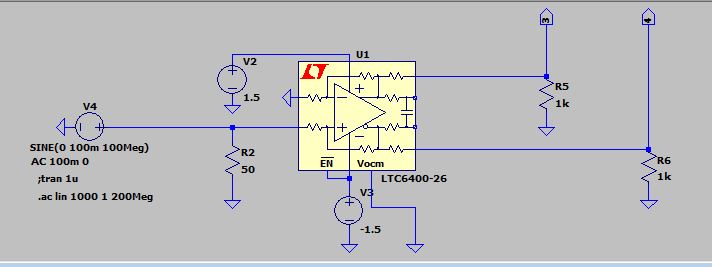

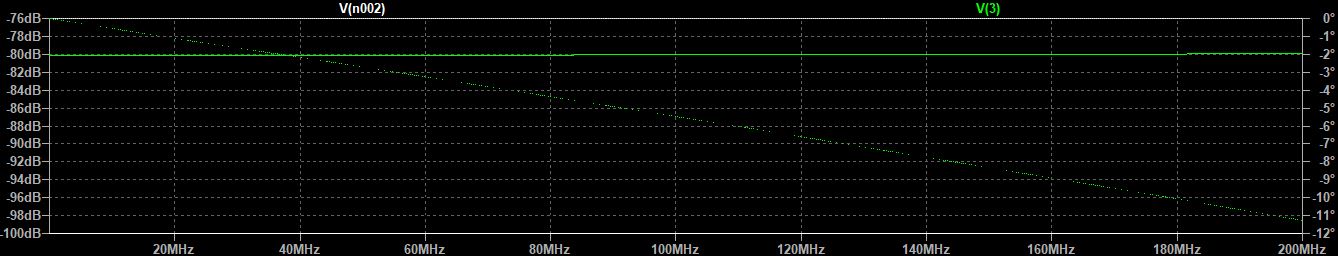

Hello, I need help if possible. At the output of this circuit I have a phase shift of about 11° at 200 MHz. Is there a practical way to reduce it, or better, eliminate it? I tried adding a feedback resistor at the output, but without success, at least not without losing gain. Thanks!
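For scale, here is a rough sketch of what 11° at 200 MHz corresponds to. The first function is plain arithmetic (phase as a fraction of one period). The second one assumes the lag comes from a single dominant low-pass pole, which is only a guess and may not match the actual circuit in the images; the function names are made up for this illustration.

```python
import math

def phase_to_delay(phase_deg, freq_hz):
    """Equivalent time delay of a phase shift at a single frequency."""
    # One full period (360 degrees) lasts 1/freq_hz seconds.
    return phase_deg / 360.0 / freq_hz

def single_pole_corner(phase_deg, freq_hz):
    """Corner frequency of a HYPOTHETICAL single-pole low-pass that would
    give a lag of phase_deg at freq_hz (assumption, not the real circuit).

    For a single pole, phase = atan(f / fc), so fc = f / tan(phase).
    """
    return freq_hz / math.tan(math.radians(phase_deg))

delay_s = phase_to_delay(11.0, 200e6)
corner_hz = single_pole_corner(11.0, 200e6)

print(f"equivalent delay: {delay_s * 1e12:.1f} ps")
print(f"implied single-pole corner: {corner_hz / 1e6:.0f} MHz")
```

So the shift is equivalent to roughly 153 ps of delay, and if it really were a single pole, that pole would sit at about 1 GHz, about five times the signal frequency.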