nagle


I've been through several iterations of a specialized switching power supply. It underperforms its LTspice model by a factor of about 2, and I don't understand why. Here's the schematic:

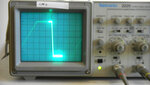

The Teletype machines this drives have a selector electromagnet with an inductance of about 5.5 H and a resistance of 220 ohms. Pulling it in takes about 120 V at 60 mA for about 2 ms to overcome the inductance; after that, about 12 V is enough to hold it.
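As a rough check on those numbers, here is a minimal sketch of the series RL step response using only the coil values quoted above. It assumes an ideal constant-voltage step; the real drive comes from the 2 µF capacitor, whose voltage sags as it discharges, so treat the results as approximate.

```python
import math

# Coil values quoted in the post (approximate).
L_COIL = 5.5    # selector magnet inductance, henries
R_COIL = 220.0  # selector magnet resistance, ohms


def coil_current(v, t, l=L_COIL, r=R_COIL):
    """Current (A) in a series RL load t seconds after an ideal step of v volts."""
    tau = l / r
    return (v / r) * (1.0 - math.exp(-t / tau))


tau = L_COIL / R_COIL
print(f"L/R time constant:      {tau * 1e3:.1f} ms")
print(f"120 V step, after 2 ms: {coil_current(120.0, 2e-3) * 1e3:.1f} mA")
print(f" 12 V step, after 2 ms: {coil_current(12.0, 2e-3) * 1e3:.1f} mA")
print(f" 12 V steady state:     {12.0 / R_COIL * 1e3:.1f} mA")
```

The L/R time constant is 25 ms, so 12 V alone would need several time constants to approach its roughly 55 mA final current, far longer than one bit. A 120 V step reaches about 42 mA within 2 ms, which is why the high-voltage kick is needed.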

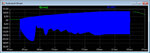

So the circuit charges a 2 µF capacitor to 120 V while the data signal is 0 and, when the data signal goes from 0 to 1, dumps the capacitor into the output. The capacitor must be charged in less than 22 ms (one bit time at 45 baud). The whole thing runs from a USB port, so only 500 mA at 4.8 V is available.
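Those figures set a hard energy budget. A minimal sketch of the arithmetic, assuming the capacitor starts from 0 V on every charge (the worst case) and ignoring everything except the stored energy:

```python
# Energy budget for one charge cycle, using the figures from the post.
C_OUT = 2e-6     # storage capacitor, farads
V_OUT = 120.0    # target capacitor voltage, volts
V_USB = 4.8      # USB supply voltage, volts
I_USB = 0.5      # USB current limit, amps
BAUD = 45.0      # line speed, bits per second

e_cap = 0.5 * C_OUT * V_OUT ** 2   # energy stored at 120 V, joules
p_usb = V_USB * I_USB              # maximum input power, watts
t_bit = 1.0 / BAUD                 # one bit time, seconds

t_lossless = e_cap / p_usb         # fastest possible charge at 100% efficiency
p_needed = e_cap / t_bit           # average output power to charge within one bit
eta_needed = p_needed / p_usb      # minimum efficiency if the full 500 mA is drawn

print(f"stored energy:        {e_cap * 1e3:.1f} mJ")
print(f"lossless charge time: {t_lossless * 1e3:.1f} ms")
print(f"bit time:             {t_bit * 1e3:.1f} ms")
print(f"power needed:         {p_needed:.3f} W")
print(f"minimum efficiency:   {eta_needed:.0%}")
```

The capacitor holds 14.4 mJ, the lossless floor is 6 ms, and finishing within one bit needs about 0.65 W delivered, i.e. roughly 27% end-to-end efficiency at the full 2.4 W USB budget.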

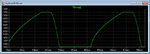

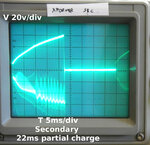

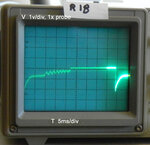

The design is standard: a transformer (Coilcraft FA2469-AL) whose primary is switched to ground through a MOSFET driven by a 50% duty-cycle square wave at about 100 kHz. On the output side there's a diode, so the voltage on the capacitor ratchets up, much like a photoflash power supply.
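One way to reason about the charge rate is the textbook energy-per-cycle estimate for a flyback converter in discontinuous conduction mode (DCM): each on-time stores ½·L·Ipk² in the primary, and all of it is transferred to the output during the off-time. The sketch below assumes an ideal transformer that fully resets every cycle, no losses, and no core saturation. `L_PRI_ASSUMED` is a hypothetical placeholder, not the FA2469-AL's datasheet value; substitute the real primary inductance.

```python
def flyback_dcm(v_in, l_pri, f_sw, duty):
    """Ideal DCM flyback: returns (peak primary current A, energy per cycle J,
    input power W, average input current A)."""
    t_on = duty / f_sw                  # switch on-time, seconds
    i_pk = v_in * t_on / l_pri          # linear ramp, no series resistance
    e_cycle = 0.5 * l_pri * i_pk ** 2   # energy stored per switching cycle
    p_in = e_cycle * f_sw               # energy per cycle times cycles per second
    i_avg = p_in / v_in                 # average current drawn from the supply
    return i_pk, e_cycle, p_in, i_avg


L_PRI_ASSUMED = 10e-6             # HYPOTHETICAL primary inductance, henries
E_CAP = 0.5 * 2e-6 * 120.0 ** 2   # energy to charge 2 uF to 120 V, joules

for f_sw in (50e3, 100e3, 200e3):
    i_pk, e_cycle, p_in, i_avg = flyback_dcm(4.8, L_PRI_ASSUMED, f_sw, 0.5)
    t_charge = E_CAP / p_in       # lossless, and ignores the USB current limit
    print(f"{f_sw / 1e3:5.0f} kHz: Ipk {i_pk:5.2f} A, {p_in:5.2f} W, "
          f"Iavg {i_avg:5.2f} A, charge {t_charge * 1e3:5.1f} ms")
```

In this idealization the power at fixed duty cycle is V²·D²/(2·L·f), so halving the frequency would double it until the USB limit or saturation intervenes. The post reports that sweeping the frequency in simulation changes little, which suggests the real circuit is limited by something this model leaves out, such as series resistance, saturation, or continuous-conduction operation.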

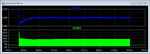

All this works, but it takes about 40-50 ms to charge the capacitor to 120 V, instead of the 20 ms or so that LTspice predicts. I need more output, and I can't figure out how to get it or why the simulation is so far off.
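The simulated and measured charge times can be converted into average power delivered into the capacitor. A small sketch, assuming a charge from 0 V each time; the percentage equals end-to-end efficiency only if the board really draws the full 500 mA, which is an assumption worth checking on the bench.

```python
E_CAP = 0.5 * 2e-6 * 120.0 ** 2   # 2 uF charged to 120 V, joules
P_USB = 4.8 * 0.5                 # USB budget, watts


def avg_charge_power(t_charge):
    """Average power (W) into the capacitor if it charges from 0 V in t_charge seconds."""
    return E_CAP / t_charge


for label, t in (("LTspice", 20e-3), ("bench, best", 40e-3), ("bench, worst", 50e-3)):
    p = avg_charge_power(t)
    print(f"{label:13s} {t * 1e3:3.0f} ms  {p:.3f} W  ({p / P_USB:.0%} of 2.4 W)")
```

The simulation corresponds to about 0.72 W (30% of the budget); the bench results correspond to roughly 0.29-0.36 W (12-15%).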

Things I've tried:

- On the real PC board, shorting out all the spike filtering and resistance on the high side of the transformer primary (it's there to protect the USB port of the computer driving this). That increases the output by about 20-30%.

- Increasing the MOSFET gate drive. No effect.

- In simulation, changing the switching frequency by up to a factor of 2 above or below 100 kHz doesn't do much.
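One possible reading of the first result is that the primary current is resistance-limited. With resistance R in series with the primary, the current during the on-time follows an RL exponential instead of a straight ramp, and since stored energy scales with Ipk², even a fraction of an ohm matters at these currents. In this sketch both the primary inductance and the resistance values are hypothetical placeholders; on the real board R would lump together the filter resistor, MOSFET on-resistance, winding resistance, and source impedance.

```python
import math


def peak_current(v_in, l_pri, r_series, t_on):
    """Primary current (A) at the end of the on-time for a series RL primary."""
    if r_series == 0.0:
        return v_in * t_on / l_pri                      # ideal linear ramp
    tau = l_pri / r_series
    return (v_in / r_series) * -math.expm1(-t_on / tau)  # (V/R)(1 - e^(-t/tau))


V_IN = 4.8
L_PRI_ASSUMED = 10e-6   # HYPOTHETICAL primary inductance, henries
T_ON = 5e-6             # 50% duty at 100 kHz

i_ideal = peak_current(V_IN, L_PRI_ASSUMED, 0.0, T_ON)
for r in (0.0, 0.25, 0.5, 1.0):   # HYPOTHETICAL total series resistance, ohms
    i_pk = peak_current(V_IN, L_PRI_ASSUMED, r, T_ON)
    ratio = (i_pk / i_ideal) ** 2   # stored energy scales with Ipk squared
    print(f"R = {r:4.2f} ohm: Ipk {i_pk:.2f} A, energy per cycle {ratio:.0%} of ideal")
```

With these placeholder values, 0.5 ohm of series resistance already cuts the energy per cycle to under 80% of the ideal; the real numbers depend on the actual inductance and resistance.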

This is my first switcher, so I don't really know what I'm doing. But clearly I'm doing something wrong.

The project is in KiCad on GitHub: https://github.com/John-Nagle/ttyloopdriver

The LTspice model is here: https://github.com/John-Nagle/ttyloopdriver/blob/master/circuitsim/ttydriver.asc
