cmos_ajay


Hello,

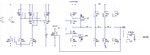

I have a CMOS oscillator based on a Schmitt trigger and a chain of inverters. A capacitor is charged by a current source. The output of the last inverter drives the gate of an NMOS, which discharges the capacitor. That output is a series of thin pulses at 720 kHz, which is fed to a D flip-flop to generate a square wave at 360 kHz.

Supply voltage = 1.4 V, total current = 1.5 µA, oscillator frequency = 360 kHz

If the supply voltage is increased to 2.5 V, the oscillator frequency changes by 40 percent because the Schmitt trigger switching voltages change with the supply. Is there a circuit that can minimize this change in frequency with supply voltage? Any suggestions or ideas will be appreciated.
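
To put a rough number on the supply dependence, here is a minimal sketch of the ramp-and-reset timing. It assumes the NMOS resets the capacitor to about 0 V, the reset pulse and inverter delays are negligible, the charging current does not change with supply, and the upper Schmitt threshold is a fixed fraction of the supply. The values `K_UPPER` and `I_CHARGE` are illustrative guesses, not measurements from this circuit. Under those assumptions the frequency ratio depends only on the two supply voltages.

```python
def pulse_frequency(i_charge, cap, v_upper):
    """Pulse rate of an ideal ramp-and-reset oscillator.

    The capacitor charges from 0 V to v_upper at constant current, so
    one period is cap * v_upper / i_charge (reset time neglected).
    """
    return i_charge / (cap * v_upper)


# Assumed (hypothetical) values, for illustration only.
K_UPPER = 0.6        # upper threshold as a fraction of the supply
I_CHARGE = 0.5e-6    # capacitor charging current in amperes

# Values taken from the post.
F_PULSE = 720e3      # pulse rate at the low supply, in hertz
VDD_LOW = 1.4        # volts
VDD_HIGH = 2.5       # volts

# Size the capacitor so the model gives 720 kHz at 1.4 V.
cap = I_CHARGE / (F_PULSE * K_UPPER * VDD_LOW)

f_low = pulse_frequency(I_CHARGE, cap, K_UPPER * VDD_LOW)
f_high = pulse_frequency(I_CHARGE, cap, K_UPPER * VDD_HIGH)
ratio = f_high / f_low  # equals VDD_LOW / VDD_HIGH in this model

print(f"capacitor    = {cap * 1e12:.2f} pF")
print(f"f at {VDD_LOW} V  = {f_low / 1e3:.0f} kHz")
print(f"f at {VDD_HIGH} V  = {f_high / 1e3:.0f} kHz")
print(f"ratio        = {ratio:.2f}")
```

In this idealized model the ratio is 1.4/2.5 = 0.56, a drop of roughly 44 percent, which is in the same range as the 40 percent change I see. That suggests the threshold tracking the supply accounts for most of the shift.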

Attached is a schematic of this oscillator.

Thanks and regards.
